MathCrew

An open-source AI math tutor for kids, Grade 1–6.

Self-host today, cloud version coming soon.

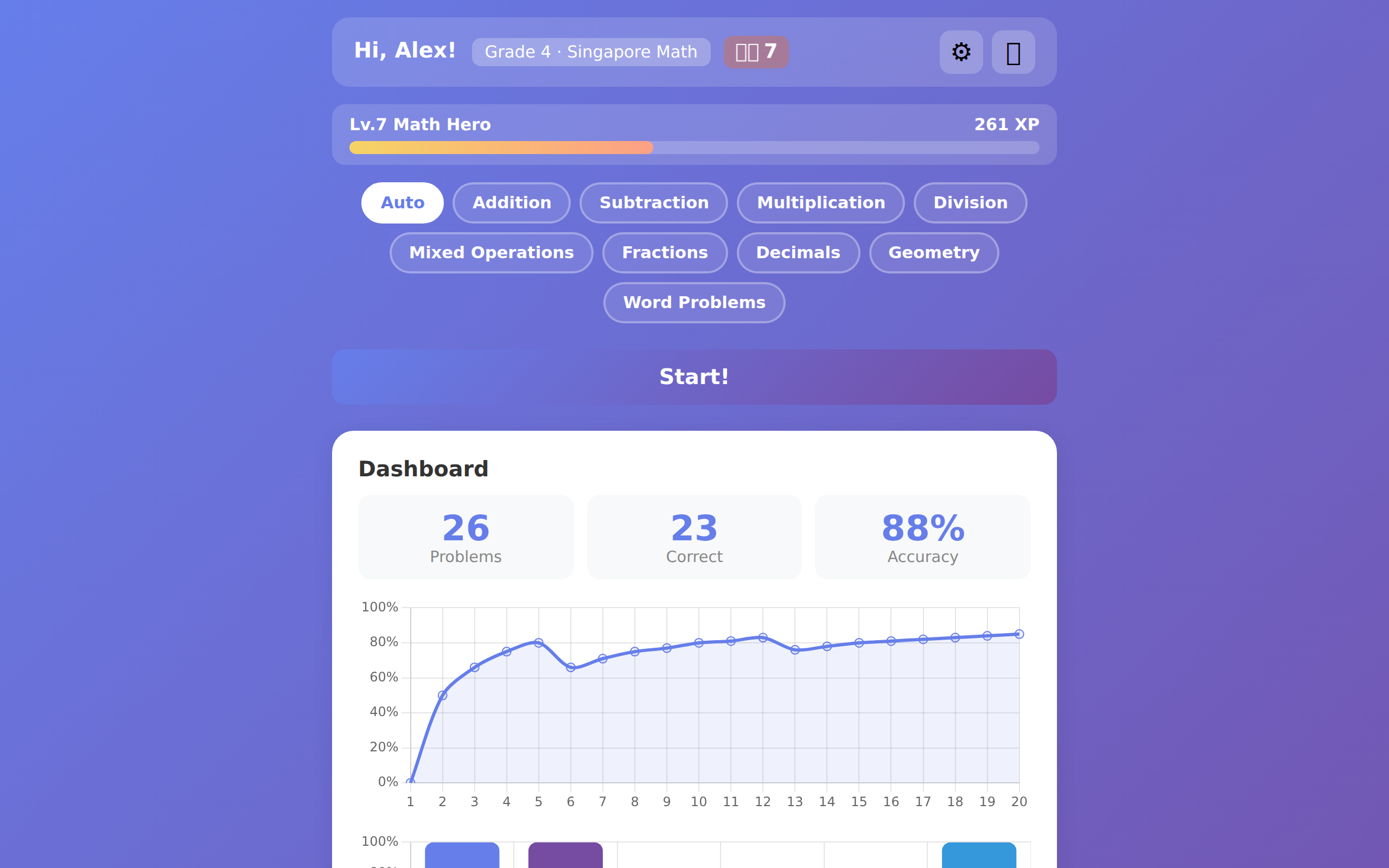

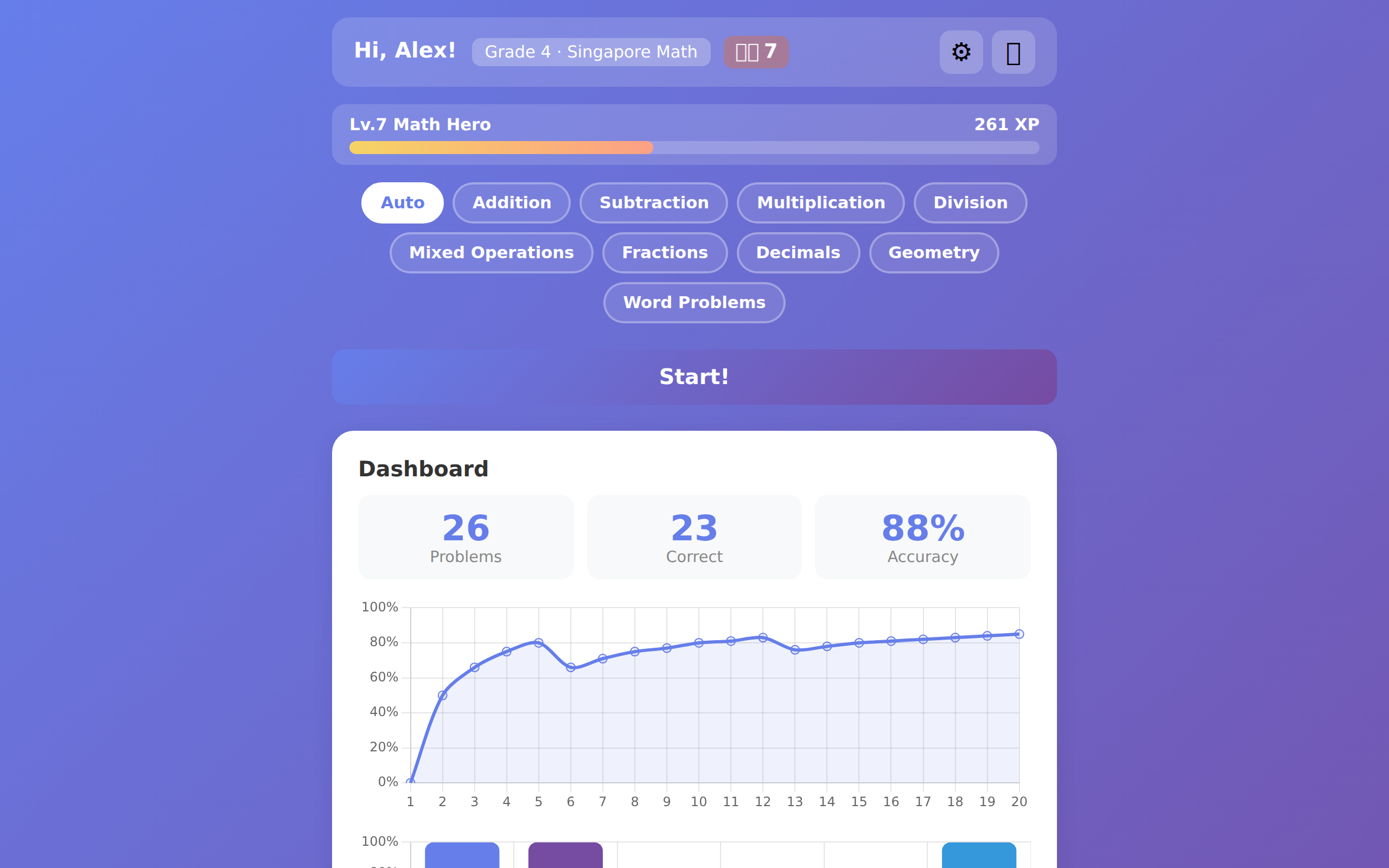

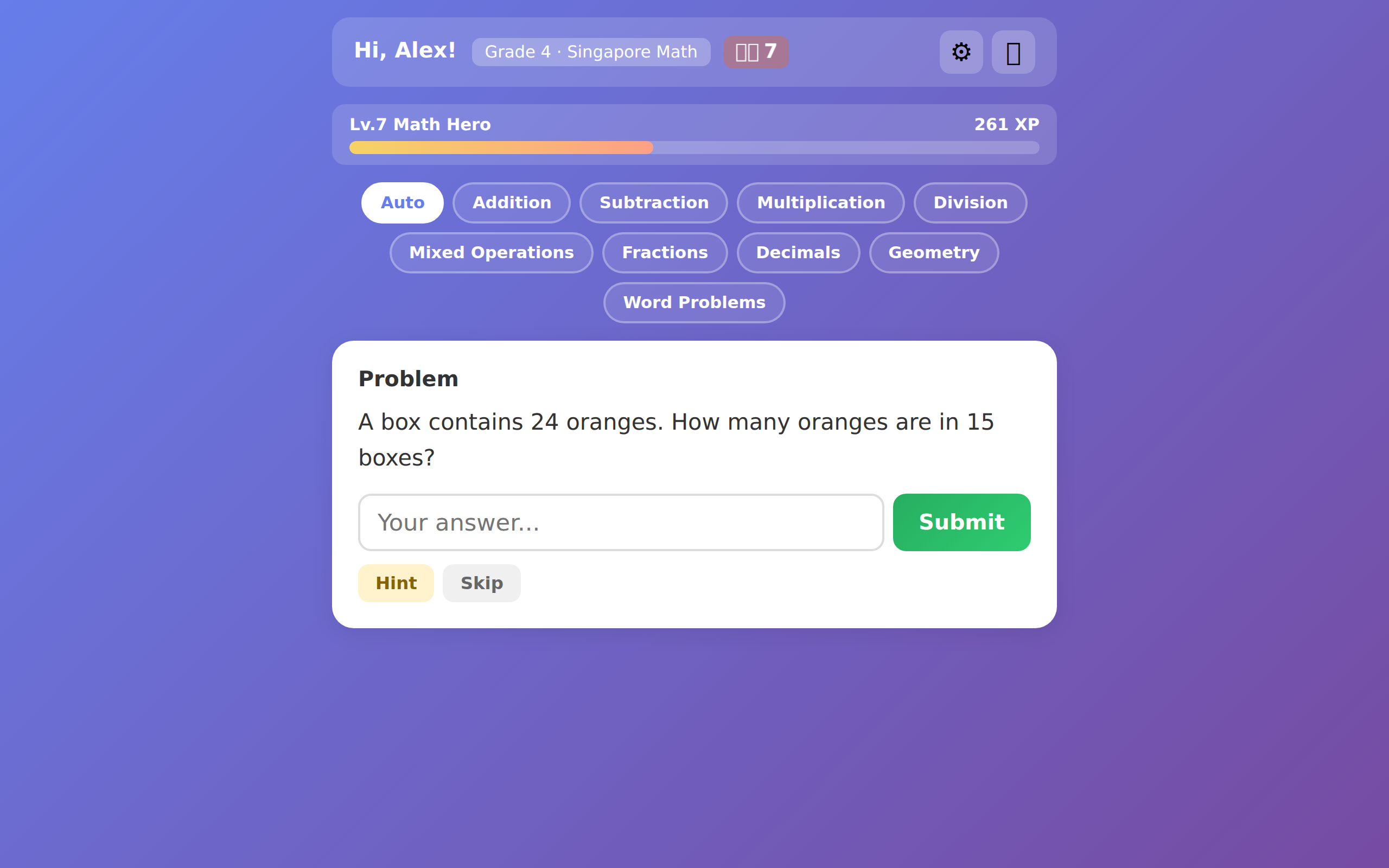

A multi-agent AI tutor that adapts to your child's learning pace, identifies mistakes, and makes math fun.

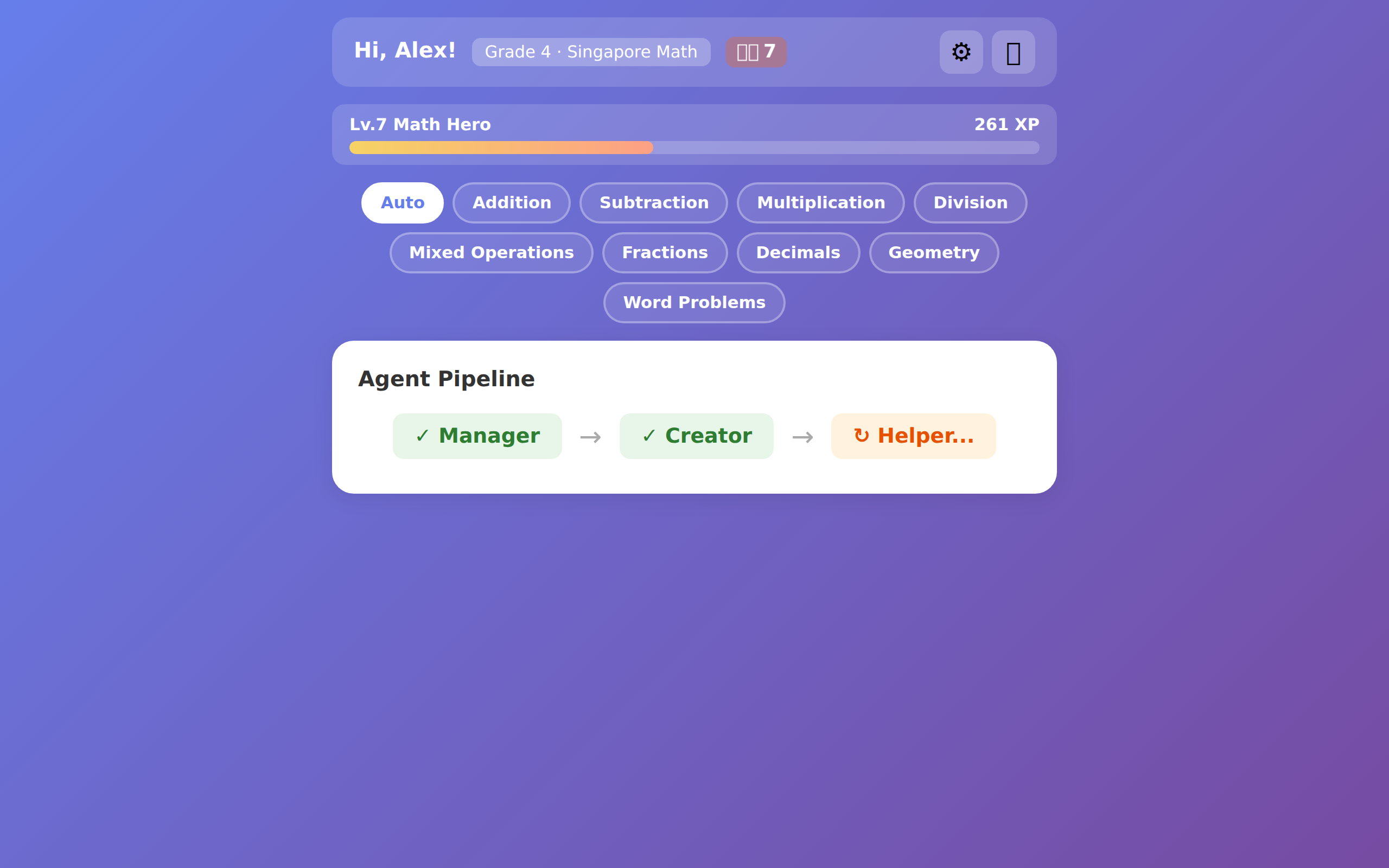

Manager analyzes history, Creator generates problems, Helper gives feedback, Analyst identifies misconceptions — all working together.

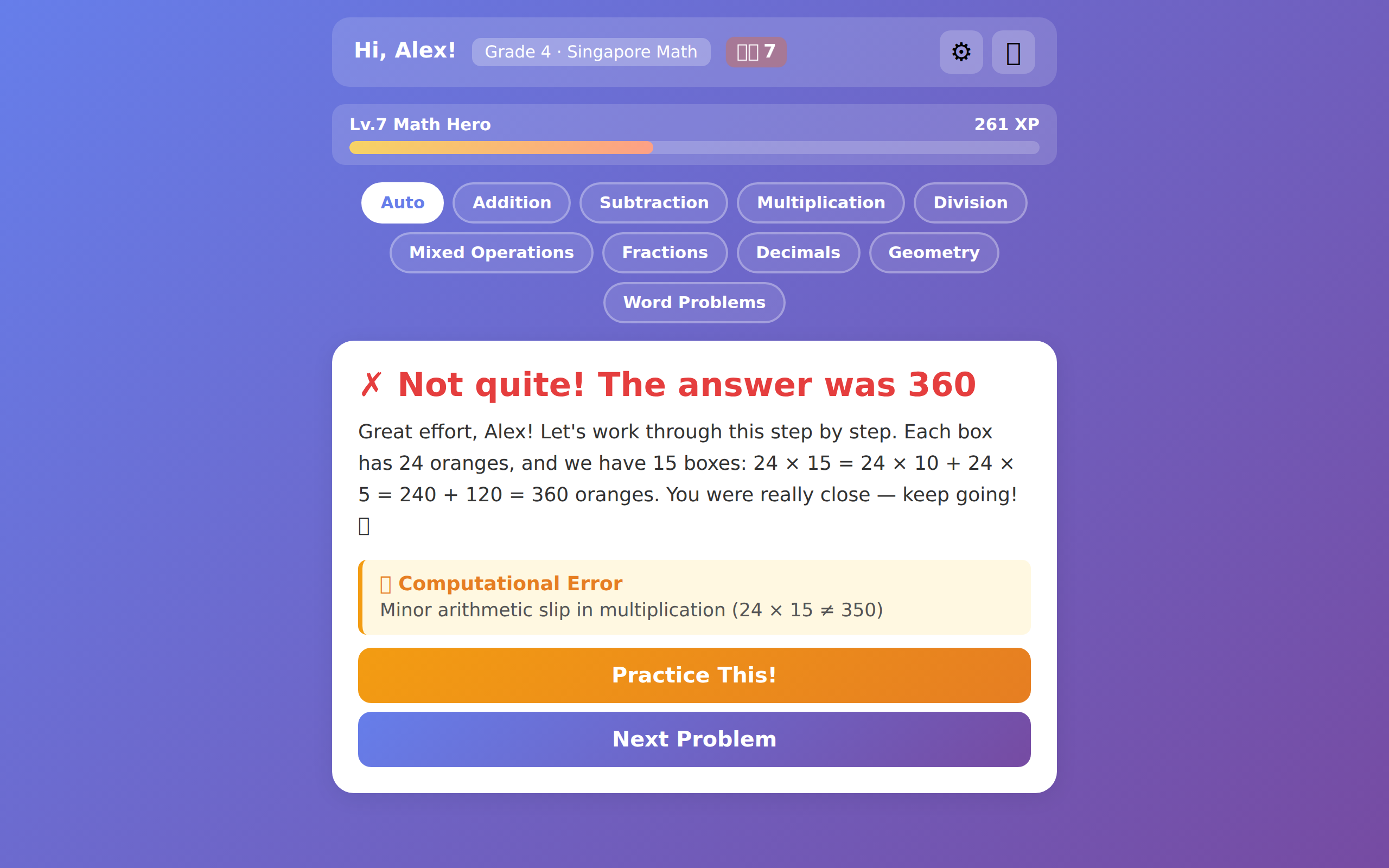

Wrong answers trigger error analysis (computational, conceptual, procedural, careless) and auto-generated practice problems.
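The four error categories translate naturally into a small data structure. The sketch below is a hypothetical illustration, not MathCrew's code. It assumes the analyst agent returns one of the four labels as free text and turns it into a follow-up practice request. The `PracticeRequest` class, the `plan_practice` helper and the per-category problem counts are all invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class ErrorType(Enum):
    """The four error categories named in the README."""
    COMPUTATIONAL = "computational"  # arithmetic slip, e.g. 7 x 8 = 54
    CONCEPTUAL = "conceptual"        # misunderstands the underlying idea
    PROCEDURAL = "procedural"        # right idea, wrong sequence of steps
    CARELESS = "careless"            # misread the question, typo, etc.


@dataclass
class PracticeRequest:
    """Hypothetical request handed to the problem-generating agent."""
    topic: str
    error_type: ErrorType
    count: int


# Hypothetical policy: deeper misunderstandings get more follow-up problems.
PRACTICE_COUNT = {
    ErrorType.CONCEPTUAL: 3,
    ErrorType.PROCEDURAL: 2,
    ErrorType.COMPUTATIONAL: 2,
    ErrorType.CARELESS: 1,
}


def plan_practice(topic: str, label: str) -> PracticeRequest:
    """Turn an analyst's free-text label into a practice request."""
    try:
        error_type = ErrorType(label.strip().lower())
    except ValueError:
        # Unrecognized label: fall back to the category with the most practice.
        error_type = ErrorType.CONCEPTUAL
    return PracticeRequest(topic, error_type, PRACTICE_COUNT[error_type])


print(plan_practice("fractions", " Procedural "))
```

Normalizing the label before the enum lookup keeps a slightly malformed LLM answer (extra spaces, capitalization) from breaking the flow.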

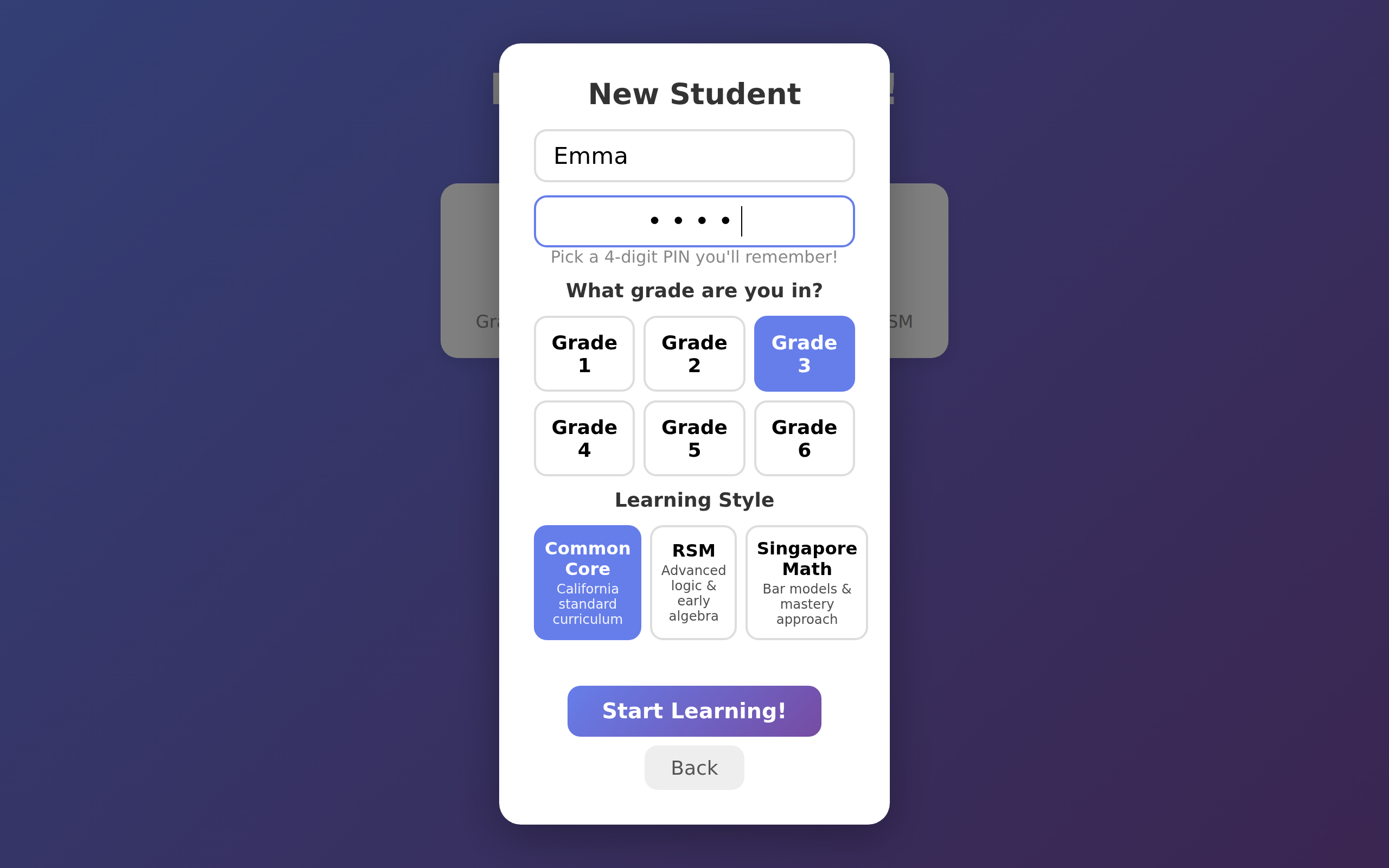

Common Core, RSM (Russian Math), or Singapore Math — each with grade-specific scope and pedagogy.

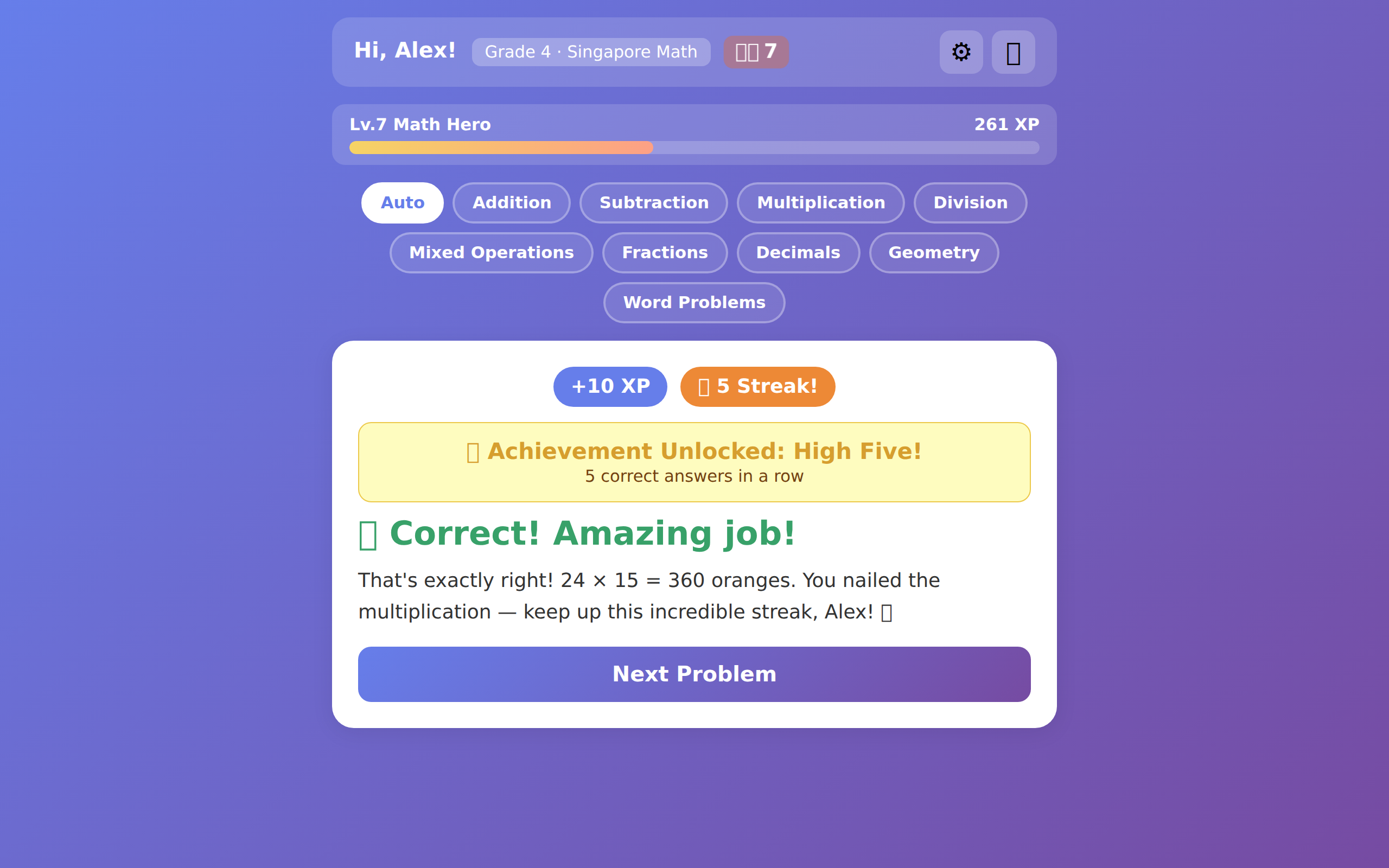

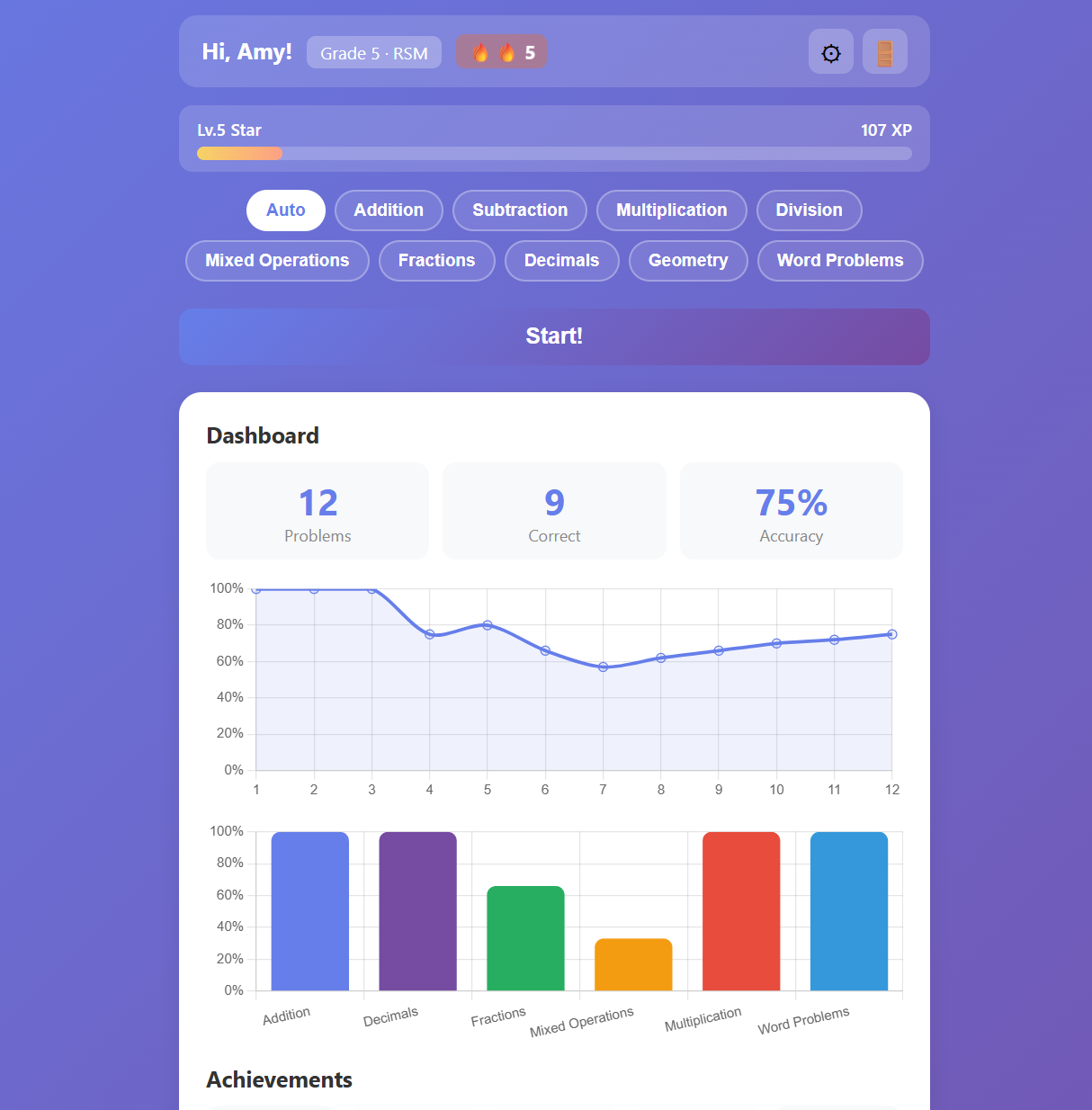

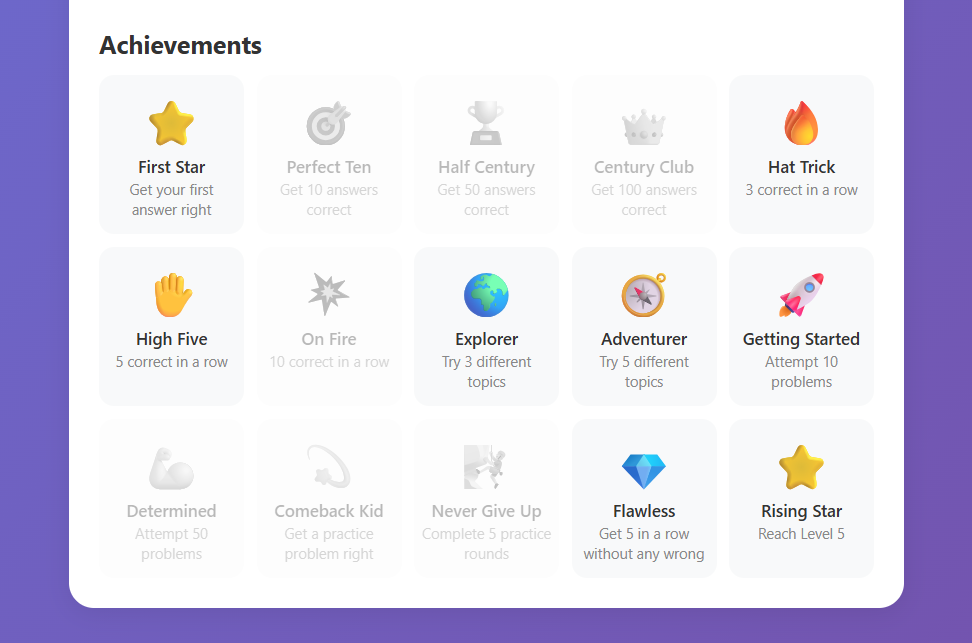

XP, 50 levels, streaks, 15 unlockable badges, and confetti celebrations keep kids motivated.
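The README does not document the XP curve or streak rules, so the following is only a minimal sketch under stated assumptions. The 50-level cap comes from the README. The flat `XP_PER_LEVEL = 100` is a hypothetical value, as are the `level_for` and `current_streak` helpers. The streak counts consecutive practice days and stays alive until the end of the day after the last session.

```python
from datetime import date, timedelta

MAX_LEVEL = 50      # from the README
XP_PER_LEVEL = 100  # hypothetical; the real level curve is not documented


def level_for(xp: int) -> int:
    """Level 1 at 0 XP, one level per XP_PER_LEVEL, capped at MAX_LEVEL."""
    return min(MAX_LEVEL, 1 + max(xp, 0) // XP_PER_LEVEL)


def current_streak(practice_days: set[date], today: date) -> int:
    """Count consecutive practice days ending today (or yesterday)."""
    # If the child has not practiced yet today, the streak is still alive
    # as long as they practiced yesterday.
    day = today if today in practice_days else today - timedelta(days=1)
    streak = 0
    while day in practice_days:
        streak += 1
        day -= timedelta(days=1)
    return streak


days = {date(2024, 5, 8), date(2024, 5, 9)}
print(current_streak(days, date(2024, 5, 10)))  # 2
print(level_for(250))                           # 3
```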

Caches generated problems to save LLM calls. Same conditions? Instant problem, zero API cost.
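One way to implement "same conditions, instant problem" is to hash the generation conditions into a cache key. The sketch below is hypothetical. It assumes conditions such as curriculum, grade, topic and difficulty (the exact fields MathCrew uses are not stated), keeps everything in memory, and takes `generate` as a stand-in for the real LLM call.

```python
import hashlib
import json
from typing import Callable


class ProblemCache:
    """In-memory cache of generated problems keyed by generation conditions."""

    def __init__(self) -> None:
        self._store: dict[str, dict] = {}
        self.llm_calls = 0  # number of cache misses, i.e. paid API calls

    @staticmethod
    def key(conditions: dict) -> str:
        # sort_keys makes the key independent of dict insertion order.
        canonical = json.dumps(conditions, sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def get_or_generate(self, conditions: dict,
                        generate: Callable[[dict], dict]) -> dict:
        k = self.key(conditions)
        if k not in self._store:
            self.llm_calls += 1  # only a miss costs an LLM call
            self._store[k] = generate(conditions)
        return self._store[k]


def fake_llm(c: dict) -> dict:
    # Stand-in for a Gemini/Ollama call.
    return {"question": f"Grade {c['grade']} {c['topic']} problem"}


cache = ProblemCache()
cond = {"curriculum": "singapore", "grade": 3, "topic": "fractions", "difficulty": 2}
cache.get_or_generate(cond, fake_llm)
cache.get_or_generate(dict(reversed(list(cond.items()))), fake_llm)  # same conditions
print(cache.llm_calls)  # 1
```

A production cache would likely persist entries (for example in SQLite) and store several problems per key so a child does not see the same question twice; both are design choices this sketch leaves out.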

Accuracy trends, per-topic performance, achievement overview — all visualized with Chart.js.
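Per-topic performance boils down to grouping attempts by topic and computing a percentage. This sketch assumes each attempt is a dict with hypothetical `topic` and `correct` fields. It emits the `labels`/`datasets` shape that Chart.js expects for a bar chart's `data` property, ready to be serialized with `json.dumps` and handed to the front end.

```python
from collections import defaultdict


def topic_accuracy_chart(attempts: list[dict]) -> dict:
    """Aggregate attempts into a Chart.js-style `data` object (percent correct)."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for a in attempts:
        totals[a["topic"]] += 1
        correct[a["topic"]] += int(a["correct"])
    topics = sorted(totals)  # stable, alphabetical x-axis
    return {
        "labels": topics,
        "datasets": [{
            "label": "Accuracy (%)",
            "data": [round(100 * correct[t] / totals[t], 1) for t in topics],
        }],
    }


attempts = [
    {"topic": "fractions", "correct": True},
    {"topic": "fractions", "correct": False},
    {"topic": "fractions", "correct": True},
    {"topic": "addition", "correct": True},
]
print(topic_accuracy_chart(attempts))
# {'labels': ['addition', 'fractions'], 'datasets': [{'label': 'Accuracy (%)', 'data': [100.0, 66.7]}]}
```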

Four specialized agents collaborate in sequence, powered by Google Gemini and Ollama.
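A sequential multi-agent flow can be modeled as a list of functions that each read and update a shared state. The sketch below is a hypothetical illustration of the Manager → Creator → Helper → Analyst order, not MathCrew's implementation. Every agent is a stub where the real one would call Gemini or Ollama. For brevity the student's answer is supplied up front; an interactive app would pause between Creator and Helper.

```python
from typing import Callable

State = dict
Agent = Callable[[State], State]


def manager(state: State) -> State:
    # Pick the topic with the lowest past accuracy from the learning history.
    history = state["history"]
    state["topic"] = min(history, key=history.get)
    return state


def creator(state: State) -> State:
    # Stub: a real agent would prompt an LLM for a problem on state["topic"].
    state["problem"] = {"question": f"[{state['topic']}] 3 + 4 = ?", "answer": "7"}
    return state


def helper(state: State) -> State:
    # Check the student's answer and produce feedback.
    ok = state["student_answer"].strip() == state["problem"]["answer"]
    state["correct"] = ok
    state["feedback"] = "Great job!" if ok else "Not quite, let's look at it together."
    return state


def analyst(state: State) -> State:
    # Stub: a real agent would ask the LLM to classify the mistake.
    state["error_type"] = None if state["correct"] else "unclassified"
    return state


PIPELINE: list[Agent] = [manager, creator, helper, analyst]


def run_pipeline(state: State) -> State:
    for agent in PIPELINE:  # agents run strictly in sequence
        state = agent(state)
    return state


result = run_pipeline({"history": {"fractions": 0.55, "addition": 0.9},
                       "student_answer": "6"})
print(result["topic"], result["correct"], result["error_type"])
```

Passing one state dict through the chain keeps each agent independent: swapping the LLM behind one agent does not change the others.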

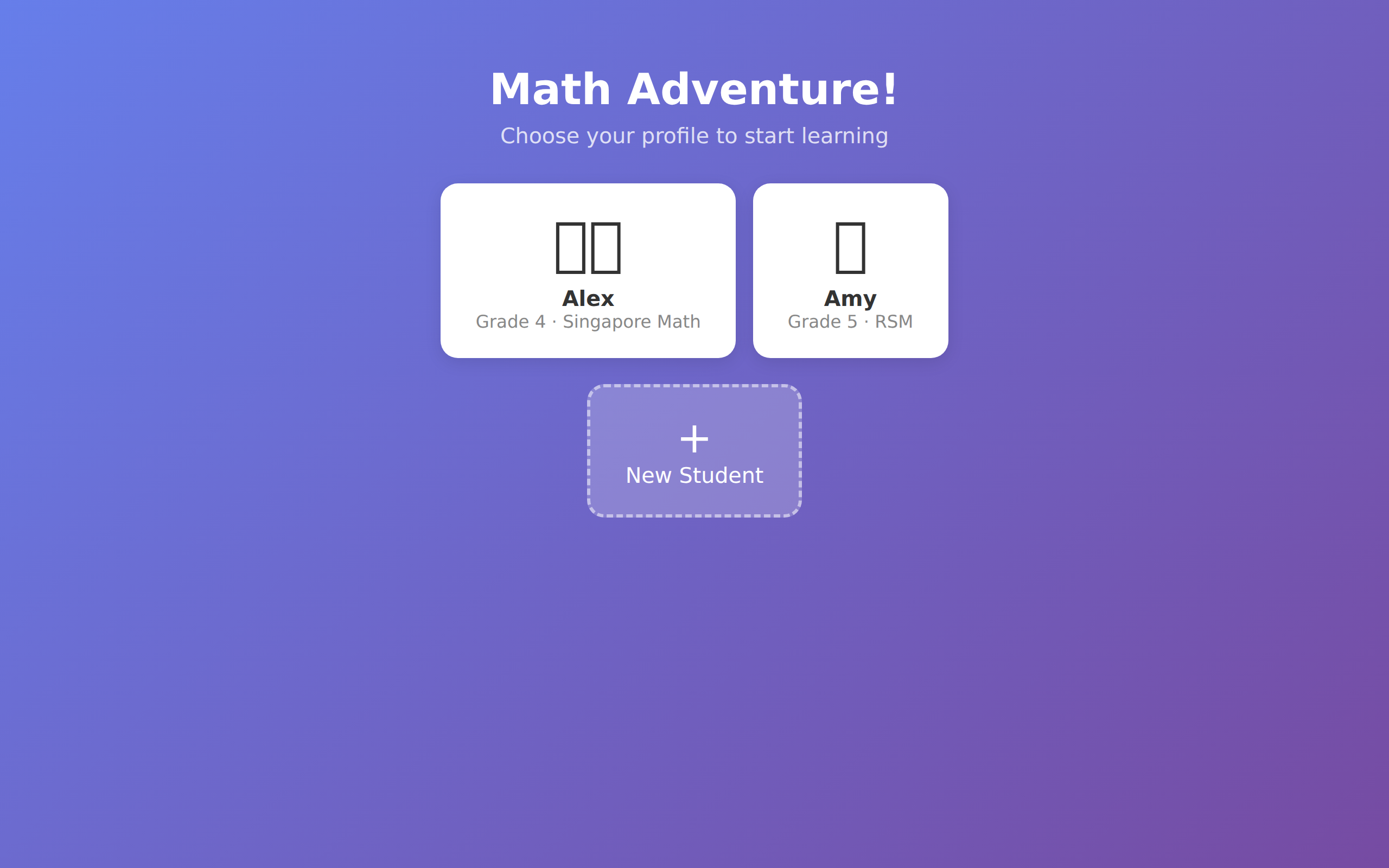

From login to dashboard — every step designed to keep kids engaged.

Charts, stats, and achievements at a glance.

All you need is Python and a free Gemini API key.

US federal law (COPPA, FERPA) places strict limits on how children's data can be collected and used in educational software. MathCrew is built from the ground up to give parents and schools full control.

Run all 4 agents on Ollama — student names, grade levels, answers, and learning history never leave your machine. No external servers, no data transmission, no legal gray area.
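To see why local mode keeps data on the machine, consider how a prompt reaches Ollama: it is an HTTP request to a server on `localhost`. The minimal sketch below uses Ollama's `/api/generate` endpoint on its default port 11434. It assumes the server is running and the model has already been pulled (e.g. `ollama pull qwen3:14b`). The helper names `build_request` and `ask_local` are hypothetical, and this is not necessarily how MathCrew wires its agents.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to Ollama on localhost and return the generated text."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


# Example (requires a running Ollama server):
# print(ask_local("qwen3:14b", "Explain 3/4 + 1/8 to a 4th grader."))
```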

The Gemini API's paid tier does not use your data for model training and supports US region selection (free-tier data may be used for product improvement). When you need the cloud, use the paid tier to keep data private.

COPPA protects children under 13 from unauthorized data collection. FERPA ensures K-12 student data is used only for education. MathCrew's local mode and data controls are designed with both in mind.

Upgrade your local LLM for stronger math reasoning. No cloud required.

| Model | VRAM (approx.) | Math | Best For |
|---|---|---|---|
| gemma3:4b | ~3 GB | Basic | Low-end hardware |
| qwen3:8b | ~6 GB | Good | 8 GB GPU |
| qwen3:14b ⭐ | ~10 GB | Strong | 16 GB GPU, recommended |
| deepseek-r1:14b | ~10 GB | Strong | Math-specialized |
| qwen3:32b | ~20 GB | Closest to Gemini | 24 GB GPU (RTX 4090) |
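The "Best For" column can be read as a simple sizing rule. This hypothetical `pick_model` helper uses the approximate VRAM figures from the model table and picks the largest listed model that fits, leaving an assumed 2 GB of headroom for the context window and other GPU users. With that headroom it reproduces the table's recommendations for 8, 16 and 24 GB cards. deepseek-r1:14b is omitted because it needs the same VRAM as qwen3:14b; swap it in if you prefer a math-specialized model.

```python
# (model, approximate VRAM in GB) from the model table, smallest first.
MODELS = [
    ("gemma3:4b", 3),
    ("qwen3:8b", 6),
    ("qwen3:14b", 10),
    ("qwen3:32b", 20),
]


def pick_model(gpu_vram_gb: float, headroom_gb: float = 2.0) -> str:
    """Return the largest listed model whose VRAM plus headroom fits the GPU."""
    best = MODELS[0][0]  # fall back to the smallest model on low-end hardware
    for name, need in MODELS:
        if need + headroom_gb <= gpu_vram_gb:
            best = name
    return best


for vram in (4, 8, 16, 24):
    print(vram, "GB ->", pick_model(vram))
```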